How to Leverage Claude for Data Analysis

Anthropic’s Claude Code helped a sales team produce a full data‑analysis case study in under an hour, turning natural‑language goals into Snowflake SQL without direct data access. By leveraging an existing dbt project, Claude iteratively generated and refined queries, quickly resolving the few issues that arose. The exercise highlighted how well‑documented data models empower AI agents to automate analytics workflows. The author shares the exact prompts used, demonstrating a repeatable process for rapid insight generation.

6 Ways to Extract Data From Salesforce Data Cloud (Updated 2026)

Salesforce Data 360, the fastest‑growing component of the Salesforce ecosystem, now supports over 300 native connectors for ingesting any data type. The platform offers six distinct ways to export that unified data: Data Activations, Data Actions, Flow‑triggered HTTP callouts, zero‑copy...

Building Declarative Data Pipelines with Snowflake Dynamic Tables: A Workshop Deep Dive

Snowflake’s recent workshop taught data engineers how to build declarative pipelines using Dynamic Tables, which automate refresh logic, dependency tracking, and incremental updates. Participants created synthetic datasets, staged transformations, and a fact table, observing real‑time performance on 10,000 order records....

Day 46: Time-Based Windowing for Real-Time Log Aggregation

The post walks through building a production‑grade time‑based windowing engine for real‑time log analytics, covering tumbling, hopping and session windows, a metrics calculator, late‑data handling, and RocksDB‑backed state persistence. It demonstrates sub‑100 ms latency while processing over 50,000 events per second...

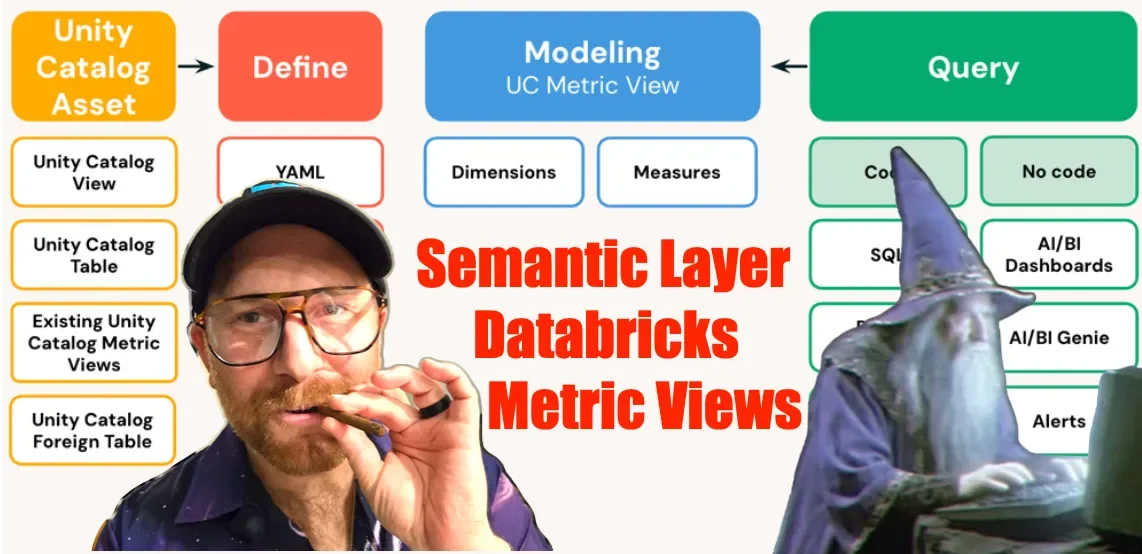

Databricks Metric Views and the Reality of the Semantic Layer

Databricks introduced Metric Views, a Unity Catalog‑based feature that centralizes metric definitions and dimensions. By storing business logic as reusable objects, teams can apply consistent calculations across SQL queries, dashboards, and AI‑driven tools. The YAML‑like syntax makes metrics human‑readable while...

Polars’ Streaming Engine Is a Bigger Deal Than People Realize

Polars' new streaming engine dramatically improves performance, halving runtimes on moderate datasets and delivering up to four‑times speedups on a 12 GB workload compared with eager execution. The library supports eager, lazy, and streaming modes, with lazy enabling predicate pushdown and...

Communiqué 110: Our Knowledge Ecosystem Takes a Giant Leap

Communiqué announced Communiqué OS, an operating system that consolidates data, intelligence and resources for Africa’s creative economy. The platform builds on a database of over 1,000 verified entities across 54 markets and adds a health index, capital‑flow tracker and policy...

Atomic Transactions in Databricks Spark SQL

Databricks announced that Unity Catalog now supports atomic transactions for managed Delta tables, entering public preview, while Iceberg tables remain in private preview. The feature introduces classic SQL transaction commands—BEGIN TRANSACTION, COMMIT, and ROLLBACK—directly in Spark SQL, extending the platform’s...

DuckDB, AI, and the Future of Data Engineering | with Staff Engineer, Matt Martin

DuckDB is emerging as a mainstream in‑process analytical engine, allowing SQL queries to run directly inside Python, R, or Julia without a separate server. Staff Engineer Matt Martin highlighted how its columnar storage and vectorized execution deliver warehouse‑level performance on...

Day 45: Implement a Simple MapReduce Framework for Batch Log Analysis

The post outlines a production‑grade MapReduce framework that handles a full map‑shuffle‑reduce pipeline for batch log analysis, processing millions of events. It features a coordinator‑worker model with automatic task retries and a partitioned storage backend for efficient shuffling. While Kafka...

You Don't Need Permission to Fix Your Data

A junior engineer named Sam quietly added data quality tests to a warehouse model, illustrating that fixing data doesn’t require formal permission. The article argues that data quality problems cost enterprises billions and consume a large share of engineers' time....

Nvidia GTC 2026: DDN Launches IndustrySync Pipelines for Financial Services and Life Sciences AI

DDN announced IndustrySync Pipelines, pre‑integrated AI data workflows for Financial Services and Life Sciences, deployable on its HyperPOD platform in days instead of months. The Financial Services pipeline promises up to 150× faster risk simulations and five‑minute risk metric refreshes,...

Datadobi Announces Early Access Program for Data Access Review

Datadobi has launched an Early Access Program for Data Access Review, a new permissions‑intelligence capability for its StorageMAP platform. The feature adds visibility into who can access unstructured data, helping organizations spot excessive, outdated, or inappropriate rights. Selected current StorageMAP...

Day 44: Real-Time Monitoring Dashboard with Kafka Streams

The post walks through building a production‑grade real‑time monitoring dashboard that ingests over 40,000 events per second using Kafka Streams. It shows how windowed aggregations, percentile calculations, and anomaly detection run on RocksDB‑backed state stores with exactly‑once guarantees. The stream...

Nvidia Plans to Make All Unstructured Data Structured

Nvidia announced a plan to structure hundreds of zettabytes of unstructured data each year, turning it into the ground‑truth foundation for artificial intelligence. The initiative relies on confidential computing, ensuring that even the platform operator cannot view the raw data....

Polars Powerful Streaming Engine

Polars’ new streaming engine offers a single‑node, Rust‑based alternative to heavyweight distributed frameworks like Spark. By applying lazy query optimisation and batch‑wise materialisation, it delivers low‑latency ETL pipelines while dramatically cutting hardware costs. Early adopters have swapped Spark jobs for...

Day 149: Orchestrating Your Log Processing Empire with Kubernetes

The post walks readers through turning a complex, distributed log‑processing stack—collectors, RabbitMQ, query engines, and storage—into a single Kubernetes deployment. By providing complete manifests, it shows how to launch the entire ecosystem with one command, while Kubernetes handles health checks,...

Understanding and Improving Data Repurposing

The authors introduce data repurposing as the practice of applying existing datasets to tasks that were not envisioned at collection time. They differentiate repurposing from traditional data reuse, emphasizing new analytical goals and contextual shifts. A structured framework is presented,...

Day 43: Implement Log Compaction for State Management

The post outlines a production‑grade state management layer built on Kafka log‑compacted topics, featuring a keyed state producer, a consumer that materializes current snapshots, and a Redis‑backed query API. By retaining only the latest record per entity key, log compaction...

Akave Raises $6.65M to Challenge Traditional Cloud Storage with Compute-Agnostic Platform Built for AI Applications

Akave has launched Akave Cloud, a decentralized, S3‑compatible storage platform built on Avalanche’s Layer 1 blockchain, targeting AI and analytics workloads. The service offers flat‑rate pricing at $14.99 per terabyte per month with zero egress fees, on‑chain verifiable audit trails, and...

Governing Real-World Health Data as a Public Utility

The article proposes governing real‑world health data as a public utility, using federated, standards‑based, community‑driven models to overcome fragmentation, proprietary control, and weak oversight. It cites ARPA‑H’s interest in economic models and highlights existing distributed networks and research enclaves as...

Claude Code Isn't Going to Replace Data Engineers (Yet)

Rob Moffat tested Claude Code’s ability to generate a full dbt project for UK flood‑monitoring data. Using a concise prompt, the model produced a complete project structure, passed all dbt tests, and even fixed its own build errors. However, the...

Day 148: Natural Language Queries with NLP - Ask Your Logs Anything

The blog announces a natural language query engine for log platforms, letting users ask questions like “show me errors from payment service in the last hour” and receive instant results. By converting conversational intent into optimized SQL, the system removes...

Sovereign Data Supply Chain: Functional and Operational Framework

The Sovereign Data Supply Chain: Functional and Operational Framework version 1.0 proposes a structured governance model for data originating from indigenous and local territories. It aims to replace extractive data practices with sovereign, rights‑based chains across Latin America and the Caribbean....

Data Integration Challenges (And How Enterprise Brands Solve Them in 2026) – Shopify

Enterprise commerce brands are losing speed and revenue because data remains trapped in siloed systems, creating integration gaps that delay decisions and cause inventory errors. Gartner predicts 94 % of CIOs will overhaul strategies within two years, yet less than half...

Databahn Highlights Accelerating Enterprise Momentum Across AI-Native Data Pipelines

Databahn, an AI‑native data pipeline platform, reported rapid enterprise traction, with Fortune 500 customers now representing over half its base. The company posted more than 400% year‑over‑year revenue growth and nearly 200% of its ARR coming from retained customers. Growth is...

Talk to Your Data | T-Systems Unveils AI Agent to Accelerate Analysis Work

T‑Systems launched “Talk to your data,” an AI‑driven chat platform that connects disparate corporate data sources, searches, analyzes, and visualizes information on demand. The solution uses an ontology layer to map data across systems, enabling natural‑language queries. Pilot projects, including...

Day 42: Exactly-Once Processing Semantics in Distributed Log Systems

The post details a new Kafka‑based log pipeline that guarantees exactly‑once processing, eliminating duplicate handling even during failures. It combines idempotent producers, transactional consumer commits, a Redis‑backed deduplication layer, and a state‑reconciliation service to create an end‑to‑end exactly‑once flow. The...

The Pipes Are Finally Moving: Why Clinical Event Streaming Is the Infrastructure Bet Nobody Took Seriously Enough

The healthcare data landscape is finally moving from three‑decades of batch ETL to event‑driven pipelines powered by Kafka, Flink and modern cloud services. Legacy systems were built around billing cycles, leaving clinicians without real‑time data for urgent decisions. Recent API...

You Don't Need Permission to Fix Your Data

The article argues that data quality improvements don’t require top‑down mandates; engineers can start fixing messy source data by writing tests, documenting issues, and building simple dashboards. By turning test failures into evidence, teams persuade source‑system owners to add validation,...

Is Your ERP a Data Graveyard: How to Unlock Millions with Nauta’s Valentina Jordan

Nauta’s AI‑native operating system overlays existing ERP, TMS and WMS platforms to turn fragmented supply‑chain data into a single, live source of truth. By ingesting emails, PDFs and spreadsheets, the platform eliminates “data graveyards” and delivers SKU‑level visibility and automated...

A Guide to Kedro: Your Production-Ready Data Science Toolbox

QuantumBlack’s open‑source Kedro framework helps data scientists move from exploratory notebooks to production‑ready pipelines. The guide walks users through installing Kedro, setting up a project, defining a data catalog, building pipelines with nodes, and configuring parameters. It also covers optional...

From Data Ambition to Public Value

Governments have moved past debating data use and now face the challenge of governing data responsibly in an AI‑driven era. The article argues that traditional, technocratic data strategies fall short because they prioritize compliance over legitimacy, privacy, and public trust....

MWC 2026: Huawei New-Gen OceanStor Dorado Converged All-Flash Storage Passes Enterprise Strategy Group Technical Validation

Huawei's New‑Gen OceanStor Dorado Converged All‑Flash Storage received technical validation from Enterprise Strategy Group (ESG). ESG's tests showed the system delivering over 876,000 IOPS with a 32 µs average latency in a high‑concurrency database workload. The architecture supports active‑active failover, tolerates...

Unlocking the Power of Public Sector Data by Overcoming Common Strategy Pitfalls

Public sector organisations view data as a strategic asset, yet many treat data strategy as a one‑off document that quickly becomes obsolete. The article outlines common pitfalls—treating strategy as paperwork, ignoring people and culture, lacking clear purpose, and failing to...

Day 146: Time Series Database Integration - Turning Logs Into Queryable Metrics

Today's post highlights the shift from raw log files to queryable metrics using time‑series databases. It explains why traditional relational databases falter with high‑write, append‑only workloads and showcases InfluxDB and TimescaleDB as purpose‑built solutions. The article illustrates how these databases...

Databricks Overtakes Snowflake

Databricks has overtaken Snowflake in quarterly revenue, now leading by $120 million after a $220 million gap two years ago. The shift is driven by AI’s demand for unstructured data, which Databricks processes directly from object storage without migration. Databricks SQL grew...

Purpose Drives Design: Functions of a Statewide Longitudinal Data System

Statewide longitudinal data systems (SLDS) can boost education and workforce outcomes, but designs vary based on intended functions—public reporting, research analytics, and individual support. The brief by Stefaan Verhulst explains how policymakers can align system architecture, governance, and legal frameworks...

Uncovering Hidden Fraud Networks

Entity resolution, knowledge graphs, and geospatial analytics together dismantle hidden fraud networks across government programs and insurance lines. By linking fragmented records—tax filings, social media, transaction logs—into unified 360‑degree profiles, investigators can spot duplicate registrations, synthetic identities, and collusive entities....

QBO Cloud and MinIO Collaborate to Deliver Enterprise-Grade Object Storage for Modern AI and Analytics Workloads

QBO Cloud and MinIO announced a joint solution that merges QBO’s bare‑metal cloud platform with MinIO’s AIStor, an exascale, S3‑compatible object storage system. The partnership delivers a unified, high‑performance data layer designed for modern AI and analytics workloads, emphasizing scalability,...

Data Readiness – Why, and How, Your Data Will Make or Break AI Success in 2026

Legal technology leaders are hosting a March 18 webinar to dissect "data readiness" as the decisive factor for AI success in the legal sector by 2026. They argue that fragmented repositories, inconsistent metadata, and weak governance are the primary obstacles...

Modern Cloud Analytics in 2026: Architecture, Use Cases, and Pitfalls – Shopify

Shopify’s 2026 guide outlines what makes cloud analytics truly modern, emphasizing a cloud‑native stack that couples elastic compute with a semantic layer, governed self‑serve, and built‑in FinOps. It contrasts legacy on‑prem and “cloud‑washed” BI with a fully modular architecture that...

5 Useful Python Scripts for Automated Data Quality Checks

The article presents five open‑source Python scripts that automate common data‑quality checks, ranging from missing‑value analysis to cross‑field consistency validation. Each tool reads CSV, Excel or JSON inputs, applies schema‑driven rules or statistical methods, and generates detailed reports with actionable...

Spark, Lakehouse & AI: A Deep Conversation with Bart Konieczny

In a recent Data Engineering Central podcast, Bart Konieczny discussed the evolving synergy between Apache Spark, lakehouse architectures, and artificial intelligence. He highlighted Spark's latest performance enhancements, including Catalyst optimizer refinements and native GPU acceleration. Konieczny explained how lakehouses bridge...

2028 - THE GREAT DATA RECKONING

The memo from Reis Megacorp outlines a 2028 scenario where AI agents can design, test, and deploy end‑to‑end data pipelines, rendering many data‑tooling jobs obsolete. By mid‑2027 the data labor market split: elite engineers commanding $400K+ salaries, a middle tier...

5 Python Data Validation Libraries You Should Be Using

Data validation is gaining prominence as pipelines become more complex, and Python now offers a diverse set of libraries to address this need. The article reviews five tools—Pydantic, Cerberus, Marshmallow, Pandera, and Great Expectations—each targeting a different validation paradigm, from...

Speedata Partners with Nebul to Bring High-Performance Big Data and AI Analytics to European Sovereign Cloud

Speedata Ltd. announced a partnership with Nebul to integrate its purpose‑built Analytics Processing Unit (APU) into Nebul’s European sovereign cloud. The APU claims up to 100× performance gains over CPUs and GPUs for Apache Spark workloads, cutting server counts and...

The Alternative Data Arms Race: Why Hedge Funds Are Spending More Than Ever:

In 2026 hedge funds are pouring tens of millions of dollars into alternative data, turning information velocity into a core competitive lever. AI-driven analytics have lowered the barrier to processing vast datasets—from satellite imagery to web traffic—shifting the edge toward...

Metadata, Measurement, And The Evolution To Data Infrastructure

TiVo has pivoted from its legacy DVR brand to a data‑infrastructure player, leveraging its deep content metadata and household viewership signals. The company emphasizes independent, comprehensive data that uniquely combines the "what" (metadata) and the "who" (audience behavior) across linear...

How Clean Connected Data Improves Pricing and Distribution

Hospitality operators often juggle disparate systems—PMS, channel managers, and finance—resulting in conflicting reports and gut‑driven decisions. The article argues that clean, connected data unifies these sources, delivering a single source of truth for pricing, distribution, marketing, and staffing. By standardising...