How IonStrike Could Help the US Military Better Manage Evolving Drone Threats

The U.S. Army’s 52nd Air Defense Artillery Brigade is conducting operational assessments of DZYNE Technologies’ IonStrike interceptor in Europe as part of the Eastern Flank Deterrence Initiative. The system is being evaluated as an intermediate‑layer air‑defense option positioned between electronic‑warfare measures and high‑cost missile interceptors. IonStrike is designed for counter‑UAS missions, offering a kinetic solution at a fraction of the cost of traditional missiles such as the $200,000 FIM‑92 Stinger, which is currently used against $40,000 loitering munitions. Its infrared seeker, proximity‑fused warhead and mid‑flight retargeting are intended to enable reliable kills against maneuvering, low‑observable drones while conserving munitions. Maj. Cody Davis emphasized that the interceptor “does not require Soldiers to learn a new kill chain,” integrating with existing radar feeds and command‑and‑control systems. Maj. Benjamin Bowman added that the summer assessment will test integration, launch through current C2, area coverage, in‑flight reallocation, and sustainment in the field. If successful, IonStrike could reshape short‑range air defense by providing a scalable, cost‑effective layer against massed drone swarms, encouraging NATO allies to adopt similar solutions. Future variants may incorporate AI‑driven targeting and autonomous swarm engagement, reinforcing the Army’s shift toward adaptable, distributed air‑defense architectures.
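The cost argument above comes down to a simple exchange ratio: defender dollars spent per attacker dollar destroyed. The sketch below works through the two figures quoted in the summary (a $200,000 Stinger against a $40,000 loitering munition). The function names and the `shots_per_kill` parameter are illustrative assumptions, and no IonStrike unit price is used because none is given.

```python
def cost_exchange_ratio(interceptor_cost: float, target_cost: float,
                        shots_per_kill: float = 1.0) -> float:
    """Defender dollars spent per attacker dollar destroyed.

    shots_per_kill is a hypothetical parameter (not from the article) for
    engagements that need more than one interceptor per target.
    """
    if target_cost <= 0 or shots_per_kill <= 0:
        raise ValueError("target_cost and shots_per_kill must be positive")
    return interceptor_cost * shots_per_kill / target_cost


def break_even_interceptor_cost(target_cost: float,
                                shots_per_kill: float = 1.0) -> float:
    """Interceptor unit cost at which the exchange ratio equals 1.0."""
    if shots_per_kill <= 0:
        raise ValueError("shots_per_kill must be positive")
    return target_cost / shots_per_kill


# Figures quoted in the summary above.
STINGER_COST_USD = 200_000
LOITERING_MUNITION_COST_USD = 40_000

stinger_ratio = cost_exchange_ratio(STINGER_COST_USD, LOITERING_MUNITION_COST_USD)
print(f"Stinger vs. loitering munition: {stinger_ratio:.1f} : 1")

# Hypothetical case: two interceptors fired per drone destroyed.
print(break_even_interceptor_cost(LOITERING_MUNITION_COST_USD, shots_per_kill=2.0))
```

With the quoted figures, the defender spends five dollars for every dollar of drone destroyed. Under these assumptions, an interceptor would have to cost no more than the target, divided by the number of shots needed per kill, to bring that ratio down to parity.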

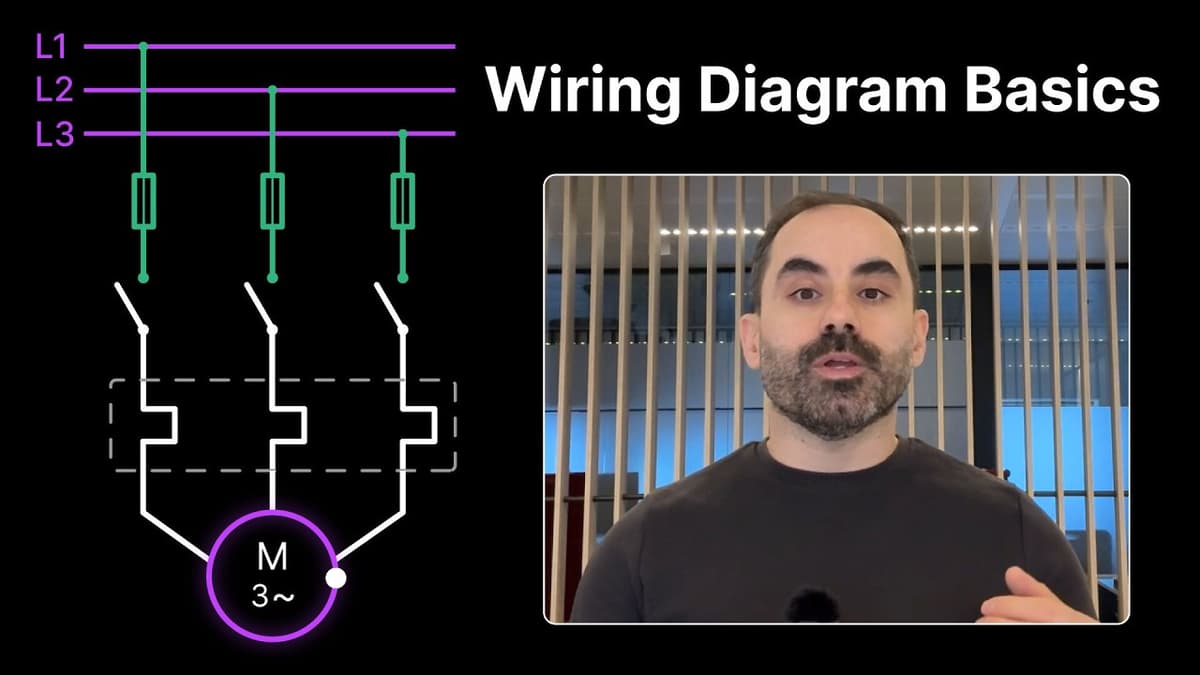

3-Phase Motor Wiring Diagram Basics

The video explains a basic three-phase motor wiring diagram, showing power supply lines L1, L2 and L3 feeding the motor through protective fuses and a three-contact contactor. It details how fuses protect the circuit by sacrificing a thin metal link...

NTU Scientists Develop Seed-Sized Surgical Robot

Researchers at Nanyang Technological University in Singapore have unveiled a seed‑sized robot that can be injected into the body and perform a suite of surgical tasks. The device is steered by external magnetic fields that can be modulated to move, cut...

Ground Robots in Latvia and the History of Manned-Unmanned Teaming

The breakout episode spotlights two fronts of unmanned warfare: ground robots being field‑tested in Latvia’s dense woods and the historical roots of manned‑unmanned teaming. Elizabeth Goslamo reports from NATO’s Crystal Arrow exercise, where UGVs equipped with Starlink struggled to maintain...

Underwater Robot Measures Ice Thickness

The video showcases a Zermatt test of an underwater robot designed to measure ice thickness from below, eliminating the need for personnel to step onto potentially hazardous ice surfaces. The robot, nicknamed Polaris, is housed in a torpedo‑shaped shell containing electronics,...

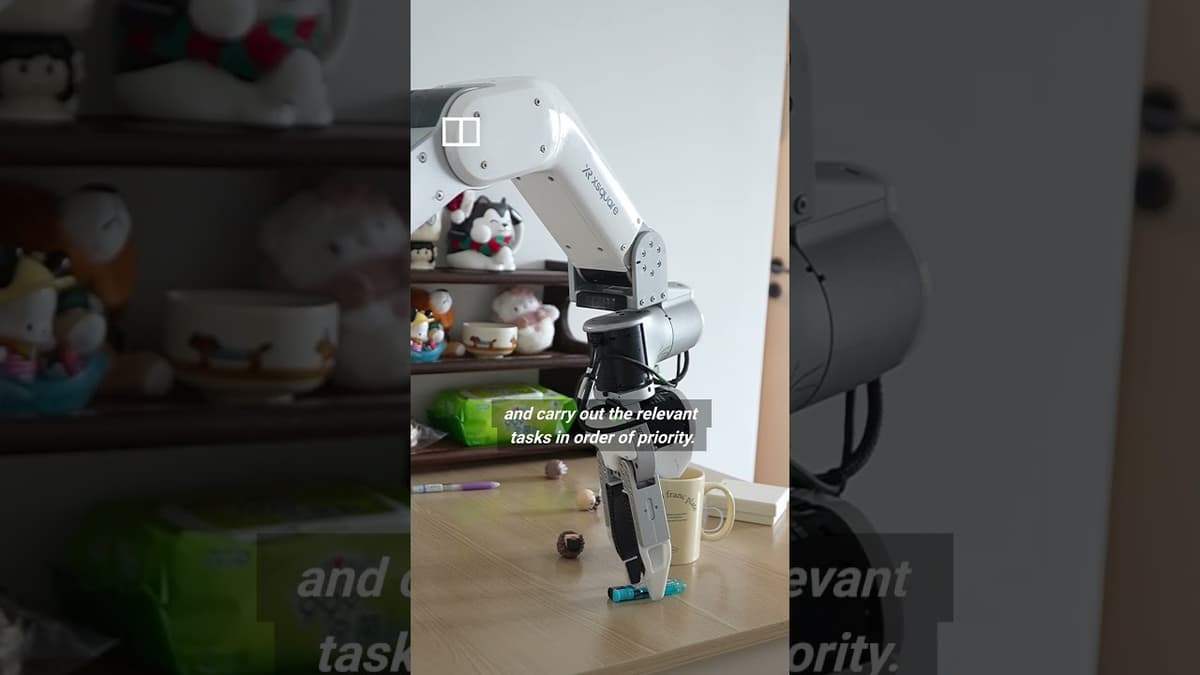

Meet China’s Home-Cleaning Robot

Shenzhen startup X-square Robot is piloting a home-cleaning service pairing human cleaners with a 1.5-meter robot assistant that handles repetitive tasks—wiping, folding and simple bin changes—while humans tackle hard-to-reach or technical chores. An on-site engineer provides technical support, reflecting the...

The Robot Dog Changing Industrial Safety 🤖🐕 @ANYbotics

The video showcases ANYbotics’ four‑legged inspection robot paired with a photorealistic digital twin of Vermense’s waste‑to‑energy incineration plant in Germany. The robot dog can navigate confined, hot boiler rooms that are unsafe for humans, while operators control it from a...

Singapore Police Force Rolls Out AI and Autonomous Tech to Boost Operations

Singapore’s Police Force is introducing a suite of AI‑driven and autonomous technologies to augment its operational capabilities. The rollout includes eight aerial drones for hard‑to‑reach surveillance, an unmanned boat capable of self‑navigation and docking, and service robots already patrolling Changi...

Can a Robot Make World-Class Coffee? Meet Artly’s AI Barista

At Muji in Portland, Artly showcased Jarvis, an AI-driven robotic barista trained by world-champion barista and co-founder Joe Yang to reproduce craft coffee at scale. The system encodes Yang’s techniques to deliver consistent, high-quality espresso and latte art, aiming to...

Robots… but Make It Anatomy Class #automation

The video offers a rapid “robot anatomy” tutorial, likening mechanical components to human body parts to demystify automation for a general audience. It explains that actuators act as muscles, converting electrical, pneumatic or hydraulic energy into motion; robot wrists are multi‑axis...

From Video Games to Battlefield: Ukraine's Drone Pilots Forged by Gaming Culture

Ukrainian forces are turning a generation of gamers into drone operators, as a recent video shows a drone‑racing competition in western Ukraine where soldiers who grew up on titles like Liftoff, Counter‑Strike and Mortal Kombat now pilot combat drones against...

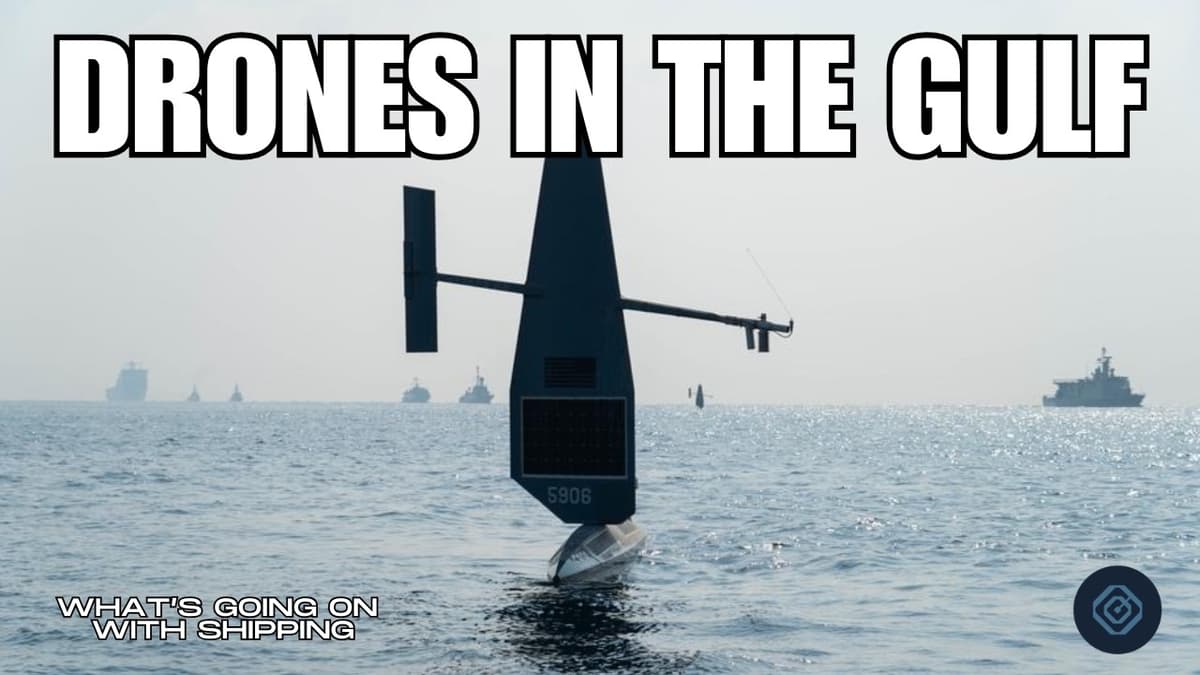

Mission Critical: Drones Helping in Hormuz | WGOWS Guests on a New Podcast

The episode focuses on the urgent mission to restore safe passage through the Strait of Hormuz, highlighting how unmanned systems have become the linchpin of that effort. Iran’s use of land‑launched UAVs and fast‑attack boats has created a sea‑denial...

Stanford Robotics Seminar ENGR319 | Spring 2026 | Interactive Autonomy

The Stanford Robotics Seminar focused on interactive autonomy, emphasizing the need for robots to interact safely and intelligently with humans and other agents across domains such as warehouses, manufacturing, and drones. The speaker highlighted that successful interaction requires joint prediction...

In Ukraine, Ground Drones Are Revolutionizing War and Saving Lives | WSJ

The Wall Street Journal report highlights how Ukraine’s unmanned ground vehicles (UGVs) are becoming a cornerstone of its war effort, performing tasks from supply runs to battlefield reconnaissance. The drones can carry payloads comparable to a pickup truck, are operated with...

The Stage | Markus Baumann, Chief Corporate Strategy Officer, CMR Surgical

Markus Baumann, Chief Corporate Strategy Officer at CMR Surgical, outlines the company’s rapid ascent in the surgical‑robotics sector. CMR, a British scale‑up, now sits second worldwide in soft‑tissue robotic systems, driven by its Versius platform—a portable, modular, ergonomically designed alternative...

Inside Taiwan’s Plan To Bring AI Robots Into Daily Life|TaiwanPlus News

Taiwan’s government unveiled a new smart robotics hub in Tainan, part of a broader ten‑initiative AI plan aimed at moving artificial intelligence out of silicon chips and into real‑world settings such as factories, hospitals, and homes. The hub’s data center showcases...

ADI Flexible Manufacturing Promo D10 101823

The video introduces Analog Devices’ (ADI) flexible manufacturing platform, positioning it as a response to evolving market pressures for smaller batch sizes, product personalization, and more resilient supply chains. It frames the modern factory as a digital ecosystem where robots...

Why Factories Use This Robot vs That Robot #automation

The video explains how manufacturers decide between SCARA robots and articulated robot arms, two of the most common industrial manipulators. SCARA units move like an arm from the elbow down, sliding side‑to‑side and up‑down on a fixed plane but without wrist...

Industrial Automation Control Systems 101

The video provides a foundational overview of Industrial Automation Control Systems (IACS), detailing how hardware and software components work together to manage automated machinery. It walks through the basic architecture—input devices, input modules, a logic module (typically a PLC), output...

WAREHOUSE TRANSFORMATION: Automation Investments Have Helped some Firms Amid Global Disruptions

The video highlights how logistics companies are overhauling warehouse operations with automation to counter global supply‑chain shocks and rising costs. Deployments such as automated storage and retrieval systems (ASRS) and AI‑driven warehouse management have lifted usable space at Singapore Pharma Tech...

Why We’re at the Beginning of the AI Hardware Boom | Caitlin Kalinowski (Ex–OpenAI, Meta, Apple)

The episode spotlights the emerging AI hardware boom, featuring veteran hardware architect Caitlin Kalinowski—formerly of Apple, Meta, and OpenAI. Kalinowski argues that the rapid vertical acceleration of AI models will soon hit a saturation point in purely software‑driven tasks, pushing...

Figure CEO Says No Teleoperation in Their Humanoid Robot Testing

Figure’s CEO used a live‑streamed test to prove its humanoid robots can operate without any tele‑operation, relying solely on the in‑house Helix‑2 neural network. Over the past fifty hours the fleet handled roughly 60,000 packages, swapping batteries and taking over...

VINAMAC EXPO 2026 Drives Industrial Innovation | VINAMAC EXPO 2026 - Thúc Đẩy Đổi Mới Sáng Tạo

The VINAMAC EXPO 2026 centered on accelerating industrial innovation in Vietnam, with a particular emphasis on automation, smart technologies, and advanced welding solutions. Organisers used the platform to showcase how emerging robotics, cobots, and AI-driven systems can modernise the country’s...

R2-Dirt2? Robots Afield in California

A new autonomous robot is being deployed in California orchards to monitor soil moisture and help growers manage water use more efficiently. The robot travels between trees, measuring soil electrical conductivity—a proxy for moisture, salinity, and texture. These readings are fused...

WWII Transformed Dutch Agriculture Forever 🍅🤖 #automation

The video explains how a post‑World War II famine prompted the Netherlands to pour resources into high‑technology agriculture, turning scarcity into a catalyst for innovation. By investing heavily in automation, robotics, and data‑driven farming, the Dutch turned a small, land‑constrained country...

Can Trusted Partners Help Secure U.S. Drone Supremacy?

The CSIS discussion with deputy director Clayton Swopee and Moroccan‑American drone entrepreneur Sufyan Amagi examines whether trusted partners can help secure U.S. drone supremacy, contrasting onshoring with a distributed, ally‑based production model. They argue that dependence on Chinese components has spurred...

Tech Podcast: Making AI Physical and Real | PowerUp

The PowerUp podcast episode spotlights Infinian Technologies’ role in turning artificial intelligence into tangible, real‑world robotics. Host Aliyia Shokat interviews Mariana Bukisc, director of marketing, who explains how advanced sensing, high‑speed processing, and precise actuation—anchored by semiconductor technology—enable robots to...

Singapore Police Force Exploring Use of Jet Packs, Armed Drones in Special Operations

Singapore Police Force is testing jet packs and armed drones for use in special operations, including maritime hostage and hostile vessel scenarios. Officials showcased a demonstration highlighting how these technologies could enhance response capabilities around the city-state’s busy ports. Leadership...

How the Dragon Cart Program Is Set to Provide Game-Changing Capability to the US Military

The Air Force Research Laboratory’s Rapid Dragon concept has been rebranded as the Dragon Cart program and officially designated a Program of Record, signaling its transition from experimental testing to a funded, fielded capability slated for 2027. Dragon Cart turns existing...

2026 Spring Robotics Colloquium: David Held (Carnegie Mellon University)

David Held of Carnegie Mellon outlined research toward robot manipulation that is both precise and generalizable, arguing that foundation models have achieved broad world knowledge but lack the task-level accuracy specialist systems provide. He presented ArticuBad, a simulation-generated dataset of...

Real-Life Transformer?! Unitree’s World-First Mass-Produced Piloted Mech GD01 ($650K)

Chinese robotics firm Unitree Robotics unveiled the GD01, a mass‑produced, piloted transforming mech that can carry a human operator and shift between bipedal and quadrupedal configurations. The GD01 is priced from $650,000 (approximately 3.9 million RMB) and weighs about 500 kg...

These AI-Powered Robot Hands Can Solve a Rubik's Cube and Make Breakfast

The video spotlights a pair of AI‑powered robotic hands that can both solve a Rubik’s Cube and whisk up a basic breakfast. Built on a combination of high‑resolution cameras, reinforcement‑learning algorithms, and dexterous actuators, the system demonstrates unprecedented fine‑motor capability...

Is Ukraine Winning the War? Inside Ukraine’s 2026 Counter-Offensive

The video examines Ukraine’s 2026 counter‑offensive, arguing that a trio of technologies—Starlink, mass‑produced drones, and the home‑grown Delta command system—has turned the tide against Russian forces. When Elon Musk cut Russian access to Starlink in February, roughly 90% of Russian units...

Navy’s Ashley Elizabeth Evans Talks Future-Ready Automation

In a UiPath Fusion public‑sector interview, Deputy Director Ashley Elizabeth Evans outlined the Navy’s roadmap for scaling automation from isolated pilots to enterprise‑wide, mission‑driven solutions. The service has run a six‑year robotic process automation (RPA) program, deploying more than 500 bots...

High Precision Excavator Control

The video introduces an autonomous control stack designed for heavy‑duty excavator grading that delivers centimeter‑level surface accuracy, addressing the precision loss and torque under‑utilization of existing semi‑automatic systems. The solution splits into two modules: a hydraulics‑aware joint velocity controller that adapts...

China’s Unitree Unveils Manned Mecha

Chinese robotics firm Unitree Robotics held a live demonstration in Shanghai, unveiling its first manned mecha – a bipedal, pilot‑operated robot that resembles a scaled‑down version of a science‑fiction mech. The prototype stands about three metres tall and tips the scales...

Stanford Robotics Seminar ENGR319 | Spring 2026 | Unlocking Autonomous Medical Robotics

The Stanford Robotics Seminar explored the emerging field of autonomous surgical robots, framing the technology as a response to a growing shortage of skilled healthcare workers. The speaker highlighted that tens of thousands of surgeons and hundreds of thousands of...

What Warehouse Automation Actually Looks Like #robots

The video showcases a modern warehouse where autonomous mobile robots work side‑by‑side with human pickers, illustrating a collaborative rather than replacement model of automation. Workers load totes onto an induction station; the system then calculates the most efficient path for each...

Open vs Short Circuits Explained Simply

The video demystifies two prevalent electrical faults—open and short circuits—by contrasting their electrical signatures and outlining systematic diagnostic procedures for industrial technicians. An open circuit is characterized by infinite resistance and a complete interruption of current, typically caused by burnt components,...

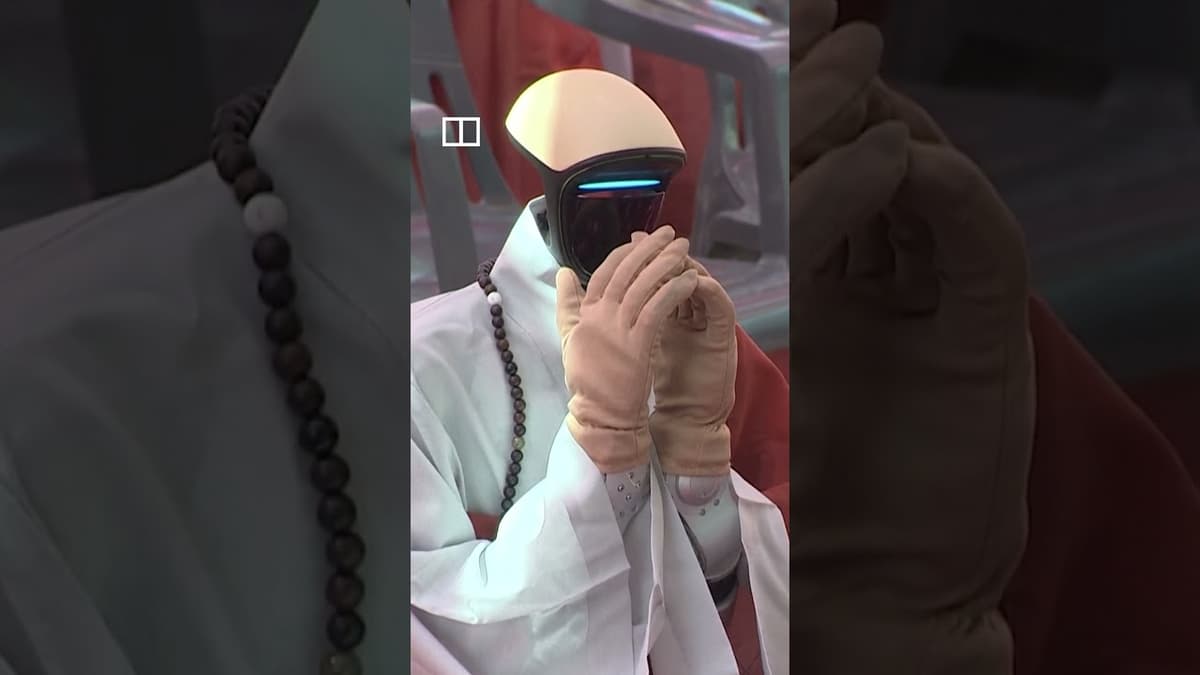

South Korea Debuts Its First Humanoid Robot Monk

South Korea unveiled its first humanoid robot monk, named “Seongri,” at the Haeinsa temple, marking a historic blend of cutting‑edge robotics with Buddhist practice. The robot, standing 1.7 meters tall, uses speech‑synthesis AI to chant sutras, answer visitor questions in Korean,...

AI Robots Can Make Dim Sum. This Means China's Job Crisis Is Already Here.

The video reports that AI‑powered robots can now make dim sum, a traditionally labor‑intensive Chinese delicacy, and that restaurants in eastern China are already deploying them to cut costs. It highlights a regulatory response requiring tea houses to disclose whether dim...

OUST CEO on Future of Robotics, Autonomous Driving

Ouster reported a strong earnings quarter, beating revenue estimates and delivering 55% year‑over‑year product revenue growth, marking its 13th straight quarter of expansion. CEO Angus Pacala highlighted the company’s role as the “eyes of autonomy” across robotics, automotive and industrial...

I Tried to Automate My Entire Property (This Got Out of Control)

In this video the creator chronicles his journey from a modest smart‑home setup to an ambitious attempt to automate an entire rural property—including a vineyard, hillside, driveway and orchard. He recounts modest progress over winter—clearing vines, tidying the house area, and...

NYC's Port Authority Tests Manhattan to Brooklyn Drone Delivery

The Port Authority of New York and New Jersey has launched a pilot program testing drone deliveries from Manhattan to Brooklyn, marking one of the first large‑scale attempts to integrate aerial logistics into a dense urban environment. The trial uses lightweight quad‑copter...

The Boeing MQ-28: The Drone Everybody Wants.

The Boeing MQ‑28 Ghost Bat, slated for service by 2028, is Australia’s first combat‑drone design since World War II. Developed jointly by the Royal Australian Air Force and Boeing Australia, the unmanned aircraft operates as a “loyal wingman,” allowing a single fighter...

South Korea Welcomes Its First Robot Buddhist Monk

South Korea has officially introduced Gavis, the nation’s first robot Buddhist monk, during a ceremony at a historic Seoul temple. The AI‑driven figure, created by a partnership between a robotics firm and Buddhist scholars, is designed to perform ritual duties...

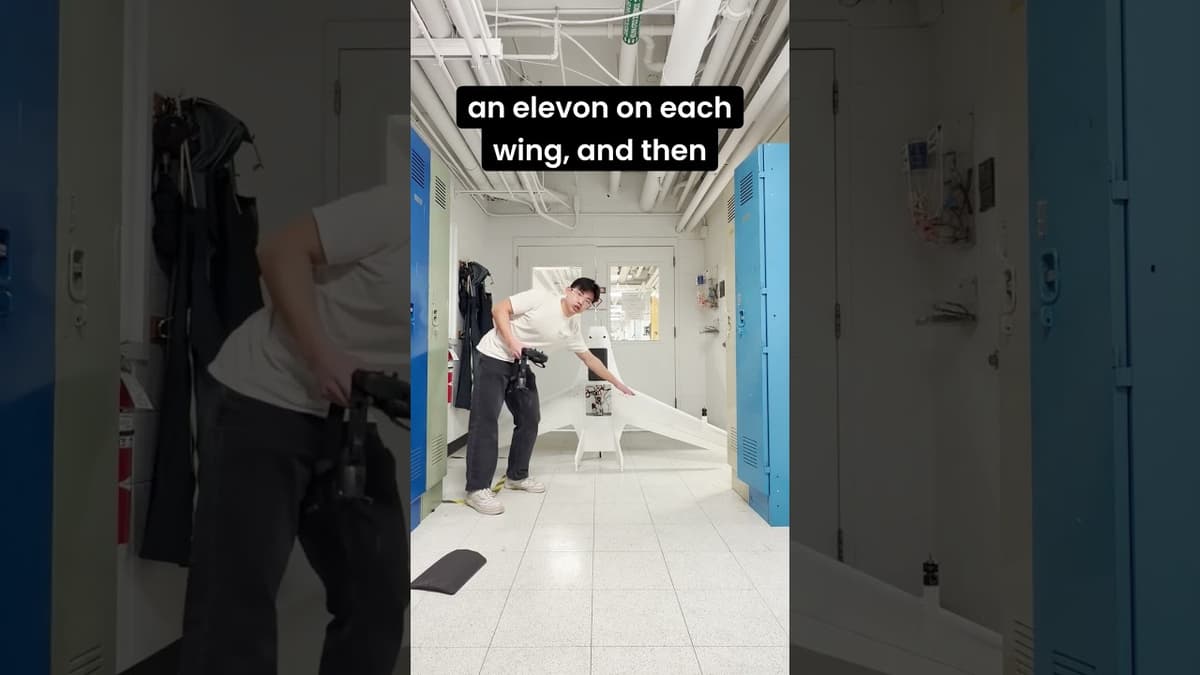

Alexander Liou's Blended-Wing Body Aircraft Senior Thesis Project

Alex Liou, a senior in mechanical and aerospace engineering, presented his thesis project: a blended‑wing‑body unmanned aircraft designed to transport a 1 kg blood product or plasma payload up to 24 km to frontline medics. The UAV combines vertical take‑off and landing...

Tutor Intelligence Demonstrates CasePick with Cassie

Tutor Intelligence showcased Cassie, a mobile autonomous robot that builds mixed‑SKU pallets without any upfront capital expenditure. The robot moves freely across a dedicated warehouse zone, docks to pallet dollies, and uses an intelligent arm to pick, weigh, measure, and...

Inside The Old Skydiving Plane Hunting Drones in Ukraine

Ukrainian volunteers have turned a Soviet‑era An‑28 skydiving aircraft into a makeshift anti‑drone platform, mounting an American‑made 3,000‑rpm machine gun to intercept Russia’s Shahed loitering munitions. The crew highlights the stark economics: roughly $500 in ammunition per drone destroyed, compared with...

Robots to Study Sperm Whale Communication|TaiwanPlus News

Scientists from the SETI project have deployed an autonomous underwater glider that locks onto sperm whale clicks and follows the animals in real time, even as they dive to 1.6 kilometers. The system allows researchers to maintain contact over hundreds of...